Quick Read

- Lee Jung-jae’s agency denied that it or the actor is under investigation, rejecting rumors of unfair transactions.

- Actor Lee Yi-kyung was targeted by AI-generated deepfakes, damaging his reputation.

- A Baltimore student was handcuffed after an AI gun detector mistook his Doritos bag for a weapon.

- Slamcore launched an AI system to make forklifts safety-aware, aiming to reduce industrial accidents.

AI Manipulation Crimes Shake the Entertainment Industry

Artificial intelligence, once the darling of innovation, is now under scrutiny as its influence spreads across sectors. Nowhere is this more evident than in entertainment, where AI-driven manipulation has become a new breed of crime. Recently, South Korean superstar Lee Jung-jae found himself at the center of swirling rumors. Reports suggested financial authorities were investigating his agency for unfair transactions. However, Artist Company, which represents Lee, swiftly denied these claims, clarifying that neither Lee nor his agency was the subject of any investigation. Their statement stressed, “Lee Jung-jae was completely unrelated to any illegal activities.” Chosun Ilbo reported that the agency has committed to pursuing legal action against anyone found to be involved in information leakage or market manipulation.

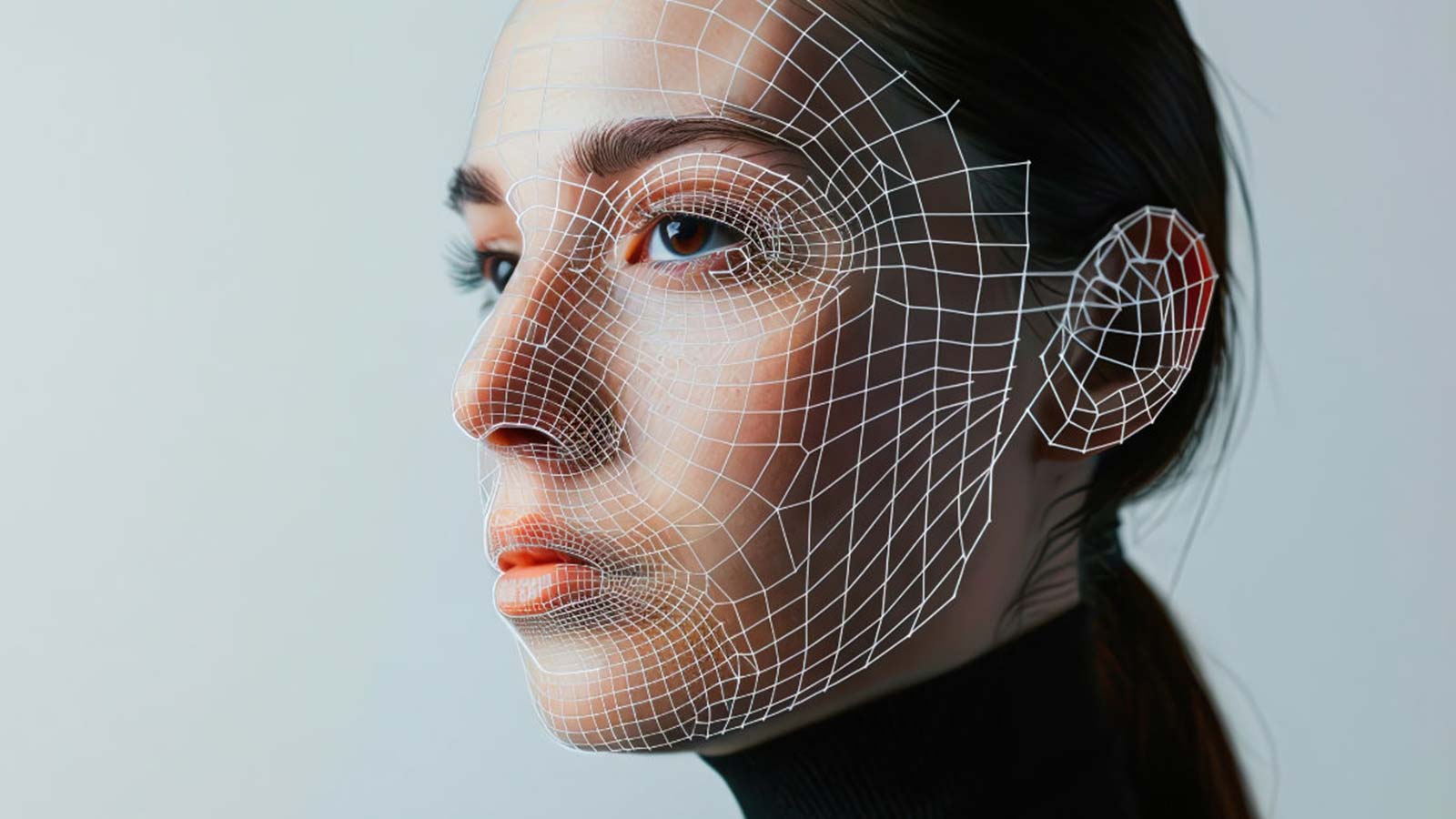

But this is only the tip of the iceberg. Actor Lee Yi-kyung became a victim of AI manipulation when someone created and circulated AI-generated conversations and photos. The fabricated content, which falsely depicted Lee in compromising situations, sparked public outrage. The agency revealed that a ‘writer’ had sent a blackmail email months prior, only to later claim it was meant to warn others. Eventually, the individual admitted to starting the AI hoax as a joke, but the damage was done. As rumors spread, netizens voiced their frustration, demanding stricter punishment for those who use AI to destroy reputations. Experts have warned that with celebrities’ faces so easily accessible online, they remain prime targets for AI-driven image manipulation. The industry now stands on high alert, seeking urgent legal and technical safeguards.

AI False Alarms: When Security Goes Awry

While the entertainment world battles deepfakes, an AI-driven alert system made headlines in Baltimore. A simple snack turned into a near-disaster when a school’s AI-powered gun detection system mistook a teenager’s Doritos bag for a firearm. Taki Allen, a high school student, was sitting with friends when police swarmed the campus, responding to the AI alert. “They made me get on my knees, put my hands behind my back, and cuffed me,” Allen told WBAL-TV 11 News. Officers searched Allen and found nothing but a crumpled bag of chips. School officials later explained that the AI had flagged a suspicious item, triggering the emergency response. The incident left students shaken, prompting the school to offer counseling and support.

Neither the school nor law enforcement has definitively confirmed the role of the Doritos bag, but details from The Guardian and Gizmodo paint a troubling picture. The AI system, provided by Omnilert, is designed to detect potential weapons using school cameras. Yet, the Baltimore case highlights a significant flaw: AI’s inability to reliably distinguish between harmless objects and real threats. Allen’s grandfather, Lamont Davis, summed up the fears of many parents, saying, “Nobody wants this to happen to their child.”

Industrial Safety: AI’s Double-Edged Sword in Warehouses

AI’s reach extends beyond schools and entertainment into the heart of industrial workplaces. Warehouses, often bustling with human and machine activity, face their own set of dangers. Slamcore, a UK-based developer, recently launched Slamcore Alert, a system designed to transform existing forklifts into safety-aware machines. With AI-enabled cameras fitted, these vehicles can detect pedestrians and alert operators in time to avoid accidents. The technology targets a grim toll: about two people die every week in the US in forklift accidents, according to Robotics & Automation News.

Owen Nicholson, Slamcore’s CEO, emphasizes that retrofitting current equipment with AI “empowers human-controlled, existing machines with ‘robot eyes’ to give them the precise spatial perception they need to operate effectively and safely.” Unlike fully autonomous robots, Slamcore Alert is designed for immediate use, offering accident prediction and prevention without expensive overhauls. The system complements Slamcore Aware, their real-time location solution, making warehouses safer while optimizing workflow. Yet, as with all AI, questions remain about accuracy, reliability, and the potential for false positives.

The Challenge: Balancing AI’s Promise with Its Risks

Across these stories, a common thread emerges: AI is reshaping how we respond to threats, real and imagined. In entertainment, deepfakes and synthetic content threaten reputations and livelihoods. In schools, well-intentioned security systems can misfire, turning innocent moments into traumatic events. In industry, AI offers life-saving potential but also introduces new uncertainties.

Experts argue that urgent legal frameworks are needed. Celebrities, students, and workers deserve protection from AI’s pitfalls. But the solution isn’t to halt progress—it’s to demand accountability, transparency, and smarter design. As AI continues to evolve, society faces a pivotal question: how do we harness its benefits while preventing its misuse?

AI’s expanding role in public and private life presents both a promise and a peril. Its power to protect is real, but as recent events in entertainment, education, and industry reveal, unchecked algorithms can inflict genuine harm. Only through vigilant oversight and thoughtful regulation can AI’s potential be realized without sacrificing safety or dignity.