Quick Read

- Google accidentally leaked its experimental COSMO AI assistant, which uses deep Android accessibility permissions.

- The app features advanced automation tools that raise significant concerns about user privacy and data surveillance.

- Experts emphasize that as AI assistants gain more autonomy, the industry must prioritize transparency and accountability to mitigate risks in a post-truth landscape.

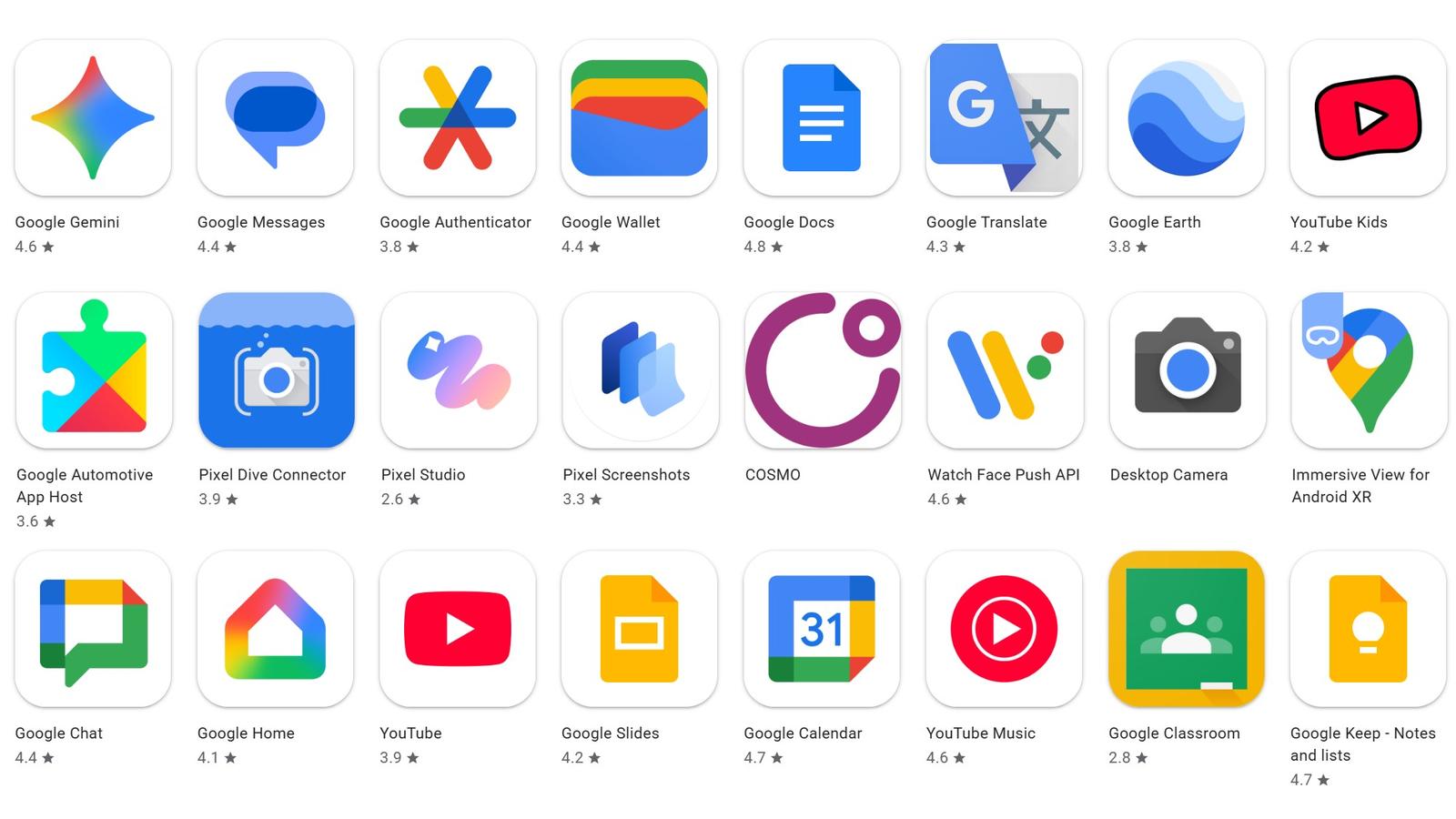

Google briefly exposed its upcoming experimental AI assistant, COSMO, on the Play Store this week, offering a rare glimpse into the company’s aggressive push to build web automation deep into everyday mobile use. The app, which was removed hours after its May 1 appearance, includes a Browser Agent and automated conversation summarization, raising immediate questions about the balance between convenience and data surveillance.

The Stakes of Deep Integration and Data Access

The COSMO app, identified by the package name com.google.research.air.cosmo, uses Android’s AccessibilityService API. Once a user enables it, an accessibility service can read on-screen content across apps, a capability that security researchers have long warned could be exploited unless it is handled transparently. By bundling a local Gemini Nano model for offline processing alongside server-side inference, Google is attempting to solve the latency issues that plague current assistants, but the trade-off is a sharp increase in the depth of personal data the system can ingest.

Algorithmic Accountability in a Post-Truth Era

The leak arrives amid a broader, industry-wide reckoning over the safety of generative AI. OpenAI CEO Sam Altman recently acknowledged that the potential for irreversible harm from rapidly deployed AI models remains a primary concern for developers. That sentiment mirrors growing anxiety among digital rights advocates, who argue that the risk of algorithmic manipulation increases as assistants like COSMO become more autonomous and gain the ability to track lists, suggest calendar events, and browse the web. When personal assistants act as gatekeepers to information, the lack of clear accountability for AI-generated errors or privacy breaches poses a significant threat to digital literacy.

Global Security and the Cost of Negligence

The tech industry’s rapid deployment of these tools comes against a backdrop of rising financial and criminal risk. Recent international law enforcement operations, including a massive FBI-led crackdown on global scam compounds, have exposed how easily bad actors exploit trust-based interfaces to defraud users. Furthermore, the $8 million fine levied against Royal Sovereign International for failing to report defective products serves as a stark reminder of the consequences when corporations prioritize market speed over consumer safety. These events underscore why transparency, in corporate reporting as well as in software updates, is essential for maintaining public trust in the digital age.

The emergence of hyper-capable AI assistants like COSMO calls for a shift from passive user acceptance to active digital skepticism. As these tools gain the technical capacity to act on behalf of the user, the burden of proof for safety and data protection must move from the consumer to the developer, backed by rigorous, independent audits of the underlying models.